In September, Tom and Reinout traveled to Berlin to attend the SMX Advanced Europe conference, where they learned about the latest trends in PPC and SEO. The event was not only a source of valuable knowledge but also offered excellent networking opportunities. In this blog post, we highlight some of the key insights Tom and Reinout brought back from the event.

Entities are the past: Search is going multidimensional

– Tom Anthony

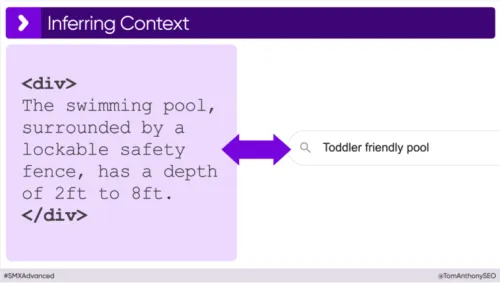

This session focused on how the rise of AI is changing the way Google reads websites. There is a shift taking place from keywords to entities. Keywords were the traditional way for search engines to interpret content, whereas entities give deeper insight into its meaning and context. Thanks to LLMs (Large Language Models), Google can not only understand the content of websites better but also connect the different pieces of information on a website to the search intent behind a query.

As an example, Tom pointed out that schema.org product structured data currently offers no way to label a product, such as a children's swimming pool, as 'child-friendly'. An LLM, however, can easily conclude from a product description like 'The pool, surrounded by a lockable safety fence, has a depth of 60 cm to 240 cm' that the pool is a suitable result for the query 'child-friendly pool'. Where structured data cannot make this connection, an LLM can.
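To make the contrast concrete, here is a minimal Python sketch of purely literal keyword matching. It is an illustration only, not how Google or an LLM works, and the helper names `tokenize` and `keyword_coverage` are hypothetical. Literal matching finds 'pool' in the description but can never find 'child-friendly', because that conclusion has to be inferred from the lockable fence and the depth, which is exactly the step an LLM adds.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into word tokens (hyphenated words stay whole)."""
    return set(re.findall(r"[a-z0-9-]+", text.lower()))


def keyword_coverage(query: str, document: str) -> tuple[float, set[str]]:
    """Return the share of query terms found literally in the document, plus the missing terms."""
    query_terms = tokenize(query)
    missing = query_terms - tokenize(document)
    coverage = (len(query_terms) - len(missing)) / len(query_terms)
    return coverage, missing


description = (
    "The pool, surrounded by a lockable safety fence, "
    "has a depth of 60 cm to 240 cm."
)
coverage, missing = keyword_coverage("child-friendly pool", description)
print(coverage, missing)  # 0.5 {'child-friendly'}
```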

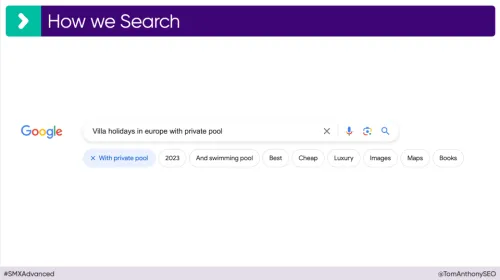

The focus is therefore shifting to context-rich pages. Google is currently testing buttons in the search bar that let users refine their queries. This puts more emphasis on long-tail keywords: as searches become more specific, so does the content users expect.

To conclude, Tom shared his vision of the future of SEO. He argued that as LLMs rise, structured data will become less important and the emphasis will shift to context-rich content on web pages, a trend already visible in Google's various content updates.

Key learnings from the presentation:

- Long-tail keywords become more important in keyword strategies.

- The use of structured data markup becomes less important in the long term.

- Integrating context-rich content becomes increasingly essential.

- The emphasis on E.E.A.T. (Experience, Expertise, Authoritativeness, Trustworthiness) increases.

Advanced tactics for using AI tools & big data analysis to improve EEAT

– Lily Ray

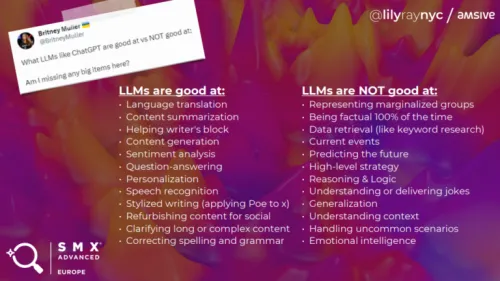

AI tools have become indispensable for optimizing content and websites. Yet the focus is often on the quantity of output AI delivers rather than the quality it can provide. During her presentation, Lily Ray shared a series of insights and practical tips on how best to use AI to improve the quality of your online content.

What are the risks of using AI?

AI is very good at certain tasks, but it is sometimes also used for tasks that humans do better. This can cause various problems:

- Content containing incorrect information.

- Copyright issues.

- Cybersecurity challenges.

- Content based on outdated data (for example, ChatGPT's training data only extended to September 2021).

What can you use AI for?

Beyond the risks, Lily gave several practical examples of what AI can be used for. She particularly encouraged SEO specialists to have AI perform the following tasks:

1. Content Analysis

One of Lily's examples involved the ChatGPT plugin WebPilot. She used it to analyze multiple URLs, gain insight into the differences in their content and determine where a page could be improved compared to competitors. After analyzing the content, she asked questions such as:

- What content is present on page A but not on page B? (information gain)

- Do the pages contain different levels of experience, expertise, authority and trustworthiness (E.E.A.T.)? If so, why?

- How can page A be improved to provide more comprehensive information than page B?
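The first question, what page A covers that page B does not, can also be approximated crudely without AI. The minimal Python sketch below is a lexical stand-in, not the WebPilot workflow: it simply lists words that occur on one page but not the other. The stopword list, the sample texts and the function names are hypothetical, and there is no stemming, so 'pool' and 'pools' count as different words. An LLM compares meaning rather than vocabulary.

```python
import re

# Small, hypothetical stopword list; a real analysis would use a fuller one.
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "is", "for", "with", "on", "our", "your"}


def terms(text: str) -> set[str]:
    """Return the set of lowercase content words in a text, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def information_gain_terms(page_a: str, page_b: str) -> list[str]:
    """Terms that appear on page A but not on page B, sorted alphabetically."""
    return sorted(terms(page_a) - terms(page_b))


page_a = "Our guide covers pool depth, safety fences and water temperature."
page_b = "Buy pools online with free delivery and safety fences."
print(information_gain_terms(page_a, page_b))
# ['covers', 'depth', 'guide', 'pool', 'temperature', 'water']
```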

Moreover, you can compare your website against Google's guidelines or recent updates. ChatGPT can analyze this information and point out where your content falls short of those guidelines and how it can be improved.

2. Data Analysis

With the Advanced Data Analysis feature in ChatGPT, you can analyze large amounts of data and draw conclusions from it. Lily showed how Search Console data can be analyzed to group clicks and impressions by subgroup, which quickly reveals which subgroups generate the most clicks and impressions.
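Outside ChatGPT, the same grouping takes only a few lines of Python. This is a sketch under stated assumptions, not Lily's exact setup: it assumes a CSV with columns named `page`, `clicks` and `impressions` (rename them to match your actual export) and uses the first URL path segment as the subgroup. The sample rows are invented.

```python
import csv
import io
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical Search Console export: one row per page with clicks and impressions.
SAMPLE_CSV = """page,clicks,impressions
https://example.com/blog/seo-tips,120,3000
https://example.com/blog/ppc-basics,80,2500
https://example.com/products/pool,180,4000
https://example.com/,50,1000
"""


def subgroup(url: str) -> str:
    """Use the first path segment as the subgroup; the homepage becomes '(root)'."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    return segments[0] if segments else "(root)"


def clicks_and_impressions_by_subgroup(rows) -> dict:
    """Sum clicks and impressions per subgroup and add a click-through rate."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0})
    for row in rows:
        group = totals[subgroup(row["page"])]
        group["clicks"] += int(row["clicks"])
        group["impressions"] += int(row["impressions"])
    for group in totals.values():
        group["ctr"] = group["clicks"] / group["impressions"] if group["impressions"] else 0.0
    # Sort subgroups by clicks, highest first.
    return dict(sorted(totals.items(), key=lambda item: item[1]["clicks"], reverse=True))


summary = clicks_and_impressions_by_subgroup(csv.DictReader(io.StringIO(SAMPLE_CSV)))
for name, stats in summary.items():
    print(f"{name}: {stats['clicks']} clicks, {stats['impressions']} impressions, CTR {stats['ctr']:.1%}")
```

Grouping by path segment suits sites whose URL structure mirrors their sections; for other sites, a lookup table from URL to category may work better.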

The next step is visualizing this data. The Show Me Diagrams plugin in ChatGPT can display the analyzed data visually. This makes the analysis easier and helps present the results more clearly to clients.
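The talk did not describe how the plugin builds its charts. As a rough, hypothetical equivalent, this Python sketch turns aggregated numbers into a Mermaid pie-chart definition, a text-based diagram format that many editors and documentation tools can render. The function name and the numbers are made up for illustration.

```python
def mermaid_pie(title: str, values: dict[str, int]) -> str:
    """Build a Mermaid pie-chart definition from label -> value pairs."""
    lines = [f"pie title {title}"]
    for label, value in values.items():
        # Mermaid expects each slice as "label" : value; double quotes would break the label.
        safe_label = label.replace('"', "'")
        lines.append(f'    "{safe_label}" : {value}')
    return "\n".join(lines)


clicks_per_subgroup = {"blog": 200, "products": 180, "(root)": 50}  # hypothetical numbers
print(mermaid_pie("Clicks per subgroup", clicks_per_subgroup))
```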

3. Generating Schema based on your page content

The WebPilot plugin for ChatGPT can also generate structured data automatically. It analyzes the existing information on your website and creates matching structured data. Lily illustrated this with an example in which Person structured data was generated from nothing more than a URL. This makes generating structured data extremely simple. However, always check the generated data before publishing it.
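Rather than trusting generated markup blindly, it can help to assemble or check it programmatically before the final manual review. The Python sketch below builds schema.org `Person` markup as JSON-LD from values you have taken from a page and flags empty required fields. The sample values and the "required fields" rule are hypothetical, not an official schema.org or Google requirement, and this complements rather than replaces a check in the Schema Markup Validator or Google's Rich Results Test.

```python
import json
from typing import Optional

# Hypothetical minimum this sketch insists on before markup goes to manual review.
REQUIRED_PERSON_FIELDS = ("name", "url")


def person_jsonld(name: str, url: str, job_title: str = "", same_as: Optional[list] = None) -> dict:
    """Build schema.org Person markup from values extracted from a page."""
    data = {"@context": "https://schema.org", "@type": "Person", "name": name, "url": url}
    if job_title:
        data["jobTitle"] = job_title
    if same_as:
        data["sameAs"] = same_as  # profile URLs that identify the same person
    return data


def missing_fields(data: dict) -> list:
    """List required fields that are absent or empty."""
    return [field for field in REQUIRED_PERSON_FIELDS if not data.get(field)]


markup = person_jsonld("Jane Doe", "https://example.com/about", "SEO consultant",
                       ["https://example.org/janedoe"])
print(missing_fields(markup))  # []
print(f'<script type="application/ld+json">\n{json.dumps(markup, indent=2)}\n</script>')
```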

Key learnings from the presentation:

The recommended use of AI for SEO:

- Content Analysis: Use AI to compare and improve content on different URLs, and assess aspects such as experience, expertise, authority and trustworthiness (E.E.A.T.).

- Data Analysis: Use AI to analyze large volumes of data, such as Search Console data, to gain insights into clicks and impressions. Then use tools to visualize this data.

- Generating Schema Structured Data: Use AI to create structured data based on page content, and validate it thoroughly before publishing.

Is there such a thing as too much crawling?

– Joost de Valk

Joost de Valk, best known for the Yoast SEO plugin and an authority in the SEO world, closed the first day of the conference with a powerful plea for environmental protection. He emphasized the responsibility SEO specialists carry in this respect. According to him, the internet will account for a fifth of global electricity consumption by 2025. He also cited figures from Cloudflare Radar indicating that 28% of web traffic is bot traffic. Combined, this means that roughly 5% of global electricity is consumed by bots.
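The bot estimate is simply the product of the two percentages, assuming that bots' share of traffic translates directly into the same share of the internet's electricity use (a simplification):

```latex
\underbrace{0.20}_{\text{internet share of global electricity}}
\times
\underbrace{0.28}_{\text{bot share of web traffic}}
= 0.056 \approx 5.6\%
```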

An example of this

Google crawls far more than a site's obvious URLs. Joost illustrated this with his father's website. At first glance, the site seems to consist of only 5 main pages and 30 blog pages, but it actually contains much more: 60 tag pages, 5 blog category pages, between 30 and 50 date archive pages and at least one author archive page. Moreover, each page has its own RSS feed, and the site also contains CSS files, JavaScript files, shortlinks, embed URLs and more. In total, this seemingly small website of 35 pages has more than 300 different URLs, all of which are crawled by Google.
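A quick tally shows how 35 visible pages turn into hundreds of URLs. This Python sketch uses the counts from Joost's example and assumes, as a simplification, that every HTML page (archives included) has exactly one RSS feed. It leaves out CSS, JavaScript, shortlinks and embed URLs, so the result is only a lower bound.

```python
# Counts from the example in the talk; date archives were given as a range of 30 to 50.
CONTENT_PAGES = {
    "main pages": 5,
    "blog pages": 30,
    "tag pages": 60,
    "blog category pages": 5,
    "author archive pages": 1,
}


def crawlable_html_and_feeds(date_archives: int) -> int:
    """HTML pages plus one RSS feed per HTML page (assumption: every page has a feed).

    CSS and JavaScript files, shortlinks and embed URLs are not counted,
    so the result is a lower bound for the total number of crawlable URLs.
    """
    html_pages = sum(CONTENT_PAGES.values()) + date_archives
    feeds = html_pages
    return html_pages + feeds


print(crawlable_html_and_feeds(30), crawlable_html_and_feeds(50))  # 262 302
```

Even before any assets are counted, the site already exposes roughly 260 to 300 URLs, which makes the "more than 300" figure plausible.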

How do we limit crawling on the website?

Log files reveal many resources and URLs that are being requested but are not needed. It is important to intervene by no longer generating these resources and by restricting access for certain bots. For many websites, there is no point in letting search engines like Baidu (China) or Seznam (Czech Republic) crawl their content. The Yoast plugin recently made it possible to limit date and author archive pages.
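As a starting point for a log file check, this Python sketch counts requests per crawler in access logs written in the common Combined Log Format. The list of bot tokens, the sample lines (with shortened user-agent strings and documentation IP addresses) and the function name are assumptions for illustration; adapt the regular expression if your server logs in a different format. Keep in mind that user-agent strings can be spoofed.

```python
import re
from collections import Counter

# Combined Log Format: ip - user [time] "METHOD path PROTOCOL" status size "referer" "user-agent"
LOG_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)

# Hypothetical list of crawler tokens to look for in the user-agent string.
BOT_TOKENS = ("Googlebot", "bingbot", "Baiduspider", "SeznamBot")


def bot_hits(lines):
    """Count requests per bot and per (bot, path) pair; non-bot traffic is ignored."""
    per_bot, per_path = Counter(), Counter()
    for line in lines:
        match = LOG_RE.match(line.strip())
        if not match:
            continue  # skip lines that are not in Combined Log Format
        agent = match["agent"].lower()
        for token in BOT_TOKENS:
            if token.lower() in agent:
                per_bot[token] += 1
                per_path[(token, match["path"])] += 1
                break
    return per_bot, per_path


SAMPLE_LOG = [
    '192.0.2.10 - - [12/Sep/2023:10:00:00 +0000] "GET /tag/seo/feed/ HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '198.51.100.20 - - [12/Sep/2023:10:00:05 +0000] "GET /2019/05/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; Baiduspider/2.0)"',
    '198.51.100.30 - - [12/Sep/2023:10:00:09 +0000] "GET /author/admin/ HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (compatible; SeznamBot/4.0)"',
    '203.0.113.7 - - [12/Sep/2023:10:01:00 +0000] "GET / HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

per_bot, per_path = bot_hits(SAMPLE_LOG)
for bot, hits in per_bot.most_common():
    print(bot, hits)
```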

Ways to reduce unnecessary crawling by bots and the creation of extra URLs include:

- Disable author and date archives.

- Disable image URLs.

- Minimize RSS feeds.

- Check your log files.

- Block unnecessary bots (like Baidu and Seznam).

- Build faster websites.
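Blocking unwanted crawlers is usually done in robots.txt. The Python sketch below defines rules for Baiduspider and SeznamBot, the user-agent tokens these two crawlers use, and checks them with the standard library's `urllib.robotparser`. The URL is a placeholder. Note that robots.txt is a request that well-behaved bots honor, not an enforcement mechanism.

```python
from urllib import robotparser

# robots.txt rules that shut out two crawlers many sites do not need.
ROBOTS_TXT = """\
User-agent: Baiduspider
Disallow: /

User-agent: SeznamBot
Disallow: /
"""

parser = robotparser.RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Crawlers without a matching group (such as Googlebot) remain allowed.
for agent in ("Baiduspider", "SeznamBot", "Googlebot"):
    allowed = parser.can_fetch(agent, "https://example.com/blog/")
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```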

Why we need a smarter keyword taxonomy (for localized searches)

– David Mihm

David started his presentation with several examples of how the SERP (Search Engine Results Page) varies depending on where the search is performed. He searched for the keyword 'Knee surgeon' in Berlin, Munich and Hamburg, and each search produced a markedly different SERP. In Berlin, no local pack was visible; in Munich, it appeared after the first three search results; and in Hamburg, only a Google Business Profile was shown. He emphasized that current SEO tools do not take these local differences into account.

David showed that building your own analysis process pays off. Among other things, he examines which domains appear in the various SERPs to analyze the competition. He classifies domains by type and size so he can exclude SERPs where local businesses stand no chance. He also assesses the opportunities on a SERP based on the position or presence of a Local Pack: if the Local Pack is at the top of the SERP, the focus is on optimizing the Google Business Profile; if it is at the bottom or missing, he focuses on improving the website for the relevant search terms.
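The decision rules above can be sketched as a small triage function. This is a hypothetical Python illustration, not David's actual method: the domain labels, the 50% threshold for "dominated by large domains" and the sample SERPs are invented, and a Local Pack anywhere other than the top is treated like the bottom/missing case, which the talk did not specify.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical threshold: above this share of large national or directory domains,
# treat the SERP as out of reach for a local business.
MAX_LARGE_DOMAIN_SHARE = 0.5


@dataclass
class LocalSerp:
    keyword: str
    city: str
    domain_types: list[str]             # one label per organic result: "local", "national" or "directory"
    local_pack_position: Optional[str]  # "top", "middle", "bottom" or None when there is no Local Pack


def recommended_focus(serp: LocalSerp) -> str:
    """Return where to spend effort for one keyword/city SERP."""
    large = sum(1 for t in serp.domain_types if t in ("national", "directory"))
    if serp.domain_types and large / len(serp.domain_types) > MAX_LARGE_DOMAIN_SHARE:
        return "skip: dominated by large domains"
    if serp.local_pack_position == "top":
        return "optimize Google Business Profile"
    # Local Pack lower on the page or missing: organic results matter most.
    return "optimize website"


serps = [
    LocalSerp("knee surgeon", "City A", ["national", "directory", "local", "national"], None),
    LocalSerp("knee surgeon", "City B", ["local", "local", "national", "local"], "bottom"),
    LocalSerp("knee surgeon", "City C", ["local", "directory", "local", "local"], "top"),
]
for serp in serps:
    print(serp.city, "->", recommended_focus(serp))
```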

To calculate the potential of each local search term, he developed a scraping method that uses pleber.com for local keywords. He then applies various filters and groupings to estimate each term's potential. Full instructions and an example Google Sheet are available on his website: https://www.davidmihm.com/smxberlin.

Key learnings from the presentation:

- Regional Variation in SERP Results: The same keyword can produce very different SERPs in different regions.

- Data-driven Keyword Selection: Use data to determine which keywords are most worth targeting locally. This applies both to the standard 'blue links' in search results and to Google Business Profiles.

Dealing With User Intent in a Time Where Google Depends on AI

– Jan-Willem Bobbink

Jan-Willem closed the SMX Advanced conference with a presentation about integrating AI into work processes. His aim was to broaden the traditional approach to search intent and develop a more realistic set of intent categories. He emphasized that AI can estimate the search intent of keywords only to a limited extent.

User intent is often reduced to four basic categories: informational, navigational, transactional and commercial investigation. However, a common cause of lost visibility is that Google starts interpreting a search differently. For example, after an update Google may prefer a guide over a category page, which shifts the SERP even though the user intent still falls into the same one of the four standard categories.

To address this, Jan-Willem proposed a system that compares search queries with their results, which helps assign the right content type to each keyword.

Jan-Willem has developed a structured system for optimizing SEO processes:

- Database Creation: Create a database with keywords and the URLs that rank for them. Tools like BeautifulSoup or Selenium can be used for this.

- Page Scraping and Classification: Scrape the content of the pages and classify the URLs based on content type.

- Identification of Best Scoring Content Type: Determine which type of content scores best for the given keywords.

- Determine Follow-up Actions: Set up follow-up actions, based on observed decreases or increases in rankings.

- Repeated Analysis: Perform this analysis biweekly and monthly for continuous optimization.
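The identification and repeated-analysis steps can be sketched in a few lines of Python once each ranking URL carries a content-type label. This is a hypothetical illustration, not Jan-Willem's published code: the labels are hard-coded where his setup would use a specially trained LLM, "most frequent type in the top 10" is an assumed definition of "best scoring", and the snapshots are invented.

```python
from collections import Counter

# Hypothetical snapshot rows: (keyword, ranking position, content type assigned by a classifier).
Snapshot = list[tuple[str, int, str]]


def dominant_content_type(snapshot: Snapshot, keyword: str, top_n: int = 10) -> str:
    """Content type that occurs most often among the top-N results for a keyword."""
    counts = Counter(ctype for kw, pos, ctype in snapshot if kw == keyword and pos <= top_n)
    if not counts:
        return "unknown"
    return counts.most_common(1)[0][0]


def intent_shifts(before: Snapshot, after: Snapshot) -> dict[str, tuple[str, str]]:
    """Keywords whose dominant content type changed between two snapshots."""
    keywords = {kw for kw, _, _ in before} | {kw for kw, _, _ in after}
    shifts = {}
    for kw in sorted(keywords):
        old, new = dominant_content_type(before, kw), dominant_content_type(after, kw)
        if old != new:
            shifts[kw] = (old, new)
    return shifts


before = [("garden pool", 1, "category page"), ("garden pool", 2, "category page"),
          ("garden pool", 3, "guide"), ("pool pump", 1, "product page"), ("pool pump", 2, "product page")]
after = [("garden pool", 1, "guide"), ("garden pool", 2, "guide"), ("garden pool", 3, "category page"),
         ("pool pump", 1, "product page"), ("pool pump", 2, "guide"), ("pool pump", 3, "product page")]

print(intent_shifts(before, after))  # {'garden pool': ('category page', 'guide')}
```

A shift like the one for 'garden pool' would be a trigger for the follow-up step, for example creating or improving a guide for that keyword.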

The scraped pages are not classified with a standard LLM but with an LLM specifically trained to classify content. Step-by-step instructions for this approach can be found at https://notprovided.eu/intent.

Key learnings from the presentation:

- Outdated Standard Search Intent: The traditional search intent categories (informational, navigational, transactional and commercial investigation) are no longer sufficient. They give no insight into the type of content Google prefers.

- Changes in User Intent: The way Google interprets searches constantly evolves. These changes affect the visibility of websites in search results.

- Mapping Changes: A specially trained Large Language Model (LLM) can detect these shifts in search intent, providing valuable insights for adjusting the SEO strategy accordingly.